Neuron Model Inference

The APIs described in this documentation apply to inference on inf2, trn1 and inf1.

NeuronModelForXXX classes help to load models from the Model Database Hub and compile them to a serialized format optimized for Neuron devices. You will then be able to load the model and run inference with the acceleration powered by AWS Neuron devices.

Switching from Transformers to Optimum

The optimum.neuron.NeuronModelForXXX model classes are API-compatible with Model Database Transformers models, so they integrate seamlessly with Model Database’s ecosystem. You can simply replace your AutoModelForXXX class with the corresponding NeuronModelForXXX class in optimum.neuron.

If you already use Transformers, you will be able to reuse your code just by replacing model classes:

from transformers import AutoTokenizer

-from transformers import AutoModelForSequenceClassification

+from optimum.neuron import NeuronModelForSequenceClassification

# PyTorch checkpoint

-model = AutoModelForSequenceClassification.from_pretrained("distilbert-base-uncased-finetuned-sst-2-english")

+model = NeuronModelForSequenceClassification.from_pretrained("distilbert-base-uncased-finetuned-sst-2-english",

+ export=True, **neuron_kwargs)

As shown above, when you use NeuronModelForXXX for the first time, you need to set export=True to compile your model from PyTorch to a Neuron-compatible format.

You will also need to pass Neuron specific parameters to configure the export. Each model architecture has its own set of parameters, as detailed in the next paragraphs.

Once your model has been exported, you can save it either locally or on the Model Database Model Hub:

# Save the neuron model

>>> model.save_pretrained("a_local_path_for_compiled_neuron_model")

# Push the neuron model to HF Hub

>>> model.push_to_hub(

... "a_local_path_for_compiled_neuron_model", repository_id="my-neuron-repo", use_auth_token=True

... )

The next time you want to run inference, just load your compiled model, which saves you the compilation time:

>>> from optimum.neuron import NeuronModelForSequenceClassification

>>> model = NeuronModelForSequenceClassification.from_pretrained("my-neuron-repo")

As you can see, there is no need to pass the Neuron arguments used during the export: they are saved in a config.json file and restored automatically by the NeuronModelForXXX class.
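If you want to check which export settings were stored, you can inspect the saved config.json directly. The following is a minimal sketch, assuming the file sits in the directory passed to save_pretrained and that the export settings are stored under a top-level "neuron" key. Both the key name and the helper read_neuron_export_args are assumptions made for illustration, not a documented API.

```python
import json
from pathlib import Path


def read_neuron_export_args(model_dir):
    """Return the export settings stored in <model_dir>/config.json.

    Assumption: the settings sit under a top-level "neuron" key.
    An empty dict is returned when that key is absent.
    """
    config_path = Path(model_dir) / "config.json"
    with config_path.open(encoding="utf-8") as f:
        config = json.load(f)
    return config.get("neuron", {})
```

For example, read_neuron_export_args("a_local_path_for_compiled_neuron_model") would return whatever the export stored under that key, or an empty dict if the layout differs from this assumption.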

Discriminative NLP models

As explained in the previous section, you need only a few modifications to your Transformers code to export and run NLP models:

from transformers import AutoTokenizer

-from transformers import AutoModelForSequenceClassification

+from optimum.neuron import NeuronModelForSequenceClassification

# PyTorch checkpoint

-model = AutoModelForSequenceClassification.from_pretrained("distilbert-base-uncased-finetuned-sst-2-english")

# Compile the model the first time you load it

+compiler_args = {"auto_cast": "matmul", "auto_cast_type": "bf16"}

+input_shapes = {"batch_size": 1, "sequence_length": 64}

+model = NeuronModelForSequenceClassification.from_pretrained(

+ "distilbert-base-uncased-finetuned-sst-2-english", export=True, **compiler_args, **input_shapes,

+)

tokenizer = AutoTokenizer.from_pretrained("distilbert-base-uncased-finetuned-sst-2-english")

inputs = tokenizer("Hamilton is considered to be the best musical of human history.", return_tensors="pt")

logits = model(**inputs).logits

print(model.config.id2label[logits.argmax().item()])

# 'POSITIVE'

compiler_args are optional arguments for the compiler. They usually control how the compiler trades off inference performance (latency and throughput) against accuracy. Here we cast FP32 operations to BF16 using the Neuron matrix-multiplication engine.

input_shapes are mandatory static shape information that you need to pass to the Neuron compiler. To find out which shapes are mandatory for your model, run the following code:

>>> from transformers import AutoModelForSequenceClassification

>>> from optimum.exporters import TasksManager

>>> model = AutoModelForSequenceClassification.from_pretrained("distilbert-base-uncased-finetuned-sst-2-english")

# Infer the task name if you don't know

>>> task = TasksManager.infer_task_from_model(model) # 'text-classification'

>>> neuron_config_constructor = TasksManager.get_exporter_config_constructor(

... model=model, exporter="neuron", task='text-classification'

... )

>>> print(neuron_config_constructor.func.get_mandatory_axes_for_task(task))

# ('batch_size', 'sequence_length')

Be careful: the input shapes used for compilation should be at least as large as the inputs that you will feed into the model during inference.

- What if input sizes are smaller than compilation input shapes?

No worries, the NeuronModelForXXX class will pad your inputs to an eligible shape. You can also set dynamic_batch_size=True in the from_pretrained method to enable dynamic batching, which means that your inputs can have a variable batch size.

(Just keep in mind: dynamic batching and padding bring not only flexibility but also a performance drop. Fair enough!)
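To make the padding step concrete, here is a small sketch of what padding one tokenized input up to the compiled sequence_length can look like. It is an illustration only, not the code used by NeuronModelForXXX: the helper name pad_to_static_length, right-side padding, and a pad token id of 0 are all assumptions.

```python
def pad_to_static_length(input_ids, sequence_length, pad_token_id=0):
    """Right-pad one tokenized sequence to the compiled sequence length.

    Returns (padded_ids, attention_mask); the mask is 0 over the padding.
    Hypothetical helper: pad side and pad token id are assumptions.
    """
    if len(input_ids) > sequence_length:
        raise ValueError(
            f"input has {len(input_ids)} tokens, but the model was compiled "
            f"for sequence_length={sequence_length}"
        )
    n_pad = sequence_length - len(input_ids)
    padded_ids = list(input_ids) + [pad_token_id] * n_pad
    attention_mask = [1] * len(input_ids) + [0] * n_pad
    return padded_ids, attention_mask


ids, mask = pad_to_static_length([101, 7632, 102], sequence_length=8)
print(ids)   # [101, 7632, 102, 0, 0, 0, 0, 0]
print(mask)  # [1, 1, 1, 0, 0, 0, 0, 0]
```

The padded positions still go through the compiled graph, which is one reason padding costs performance: the device always computes over the full static shape.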

Generative NLP models

As with discriminative models, you need only a few modifications to your Transformers code to export and run generative NLP models.

Configuring the export of a generative model

There are three main parameters that can be passed to the from_pretrained() method to configure how a transformers checkpoint is exported to a Neuron-optimized model:

- batch_size is the number of input sequences that the model will accept. Defaults to 1.

- num_cores is the number of neuron cores used when instantiating the model. Each neuron core has 16 GB of memory, which means that bigger models need to be split across multiple cores. Defaults to 1.

- auto_cast_type specifies the format used to encode the weights. It can be one of f32 (float32), f16 (float16) or bf16 (bfloat16). Defaults to f32.

from transformers import AutoTokenizer

-from transformers import AutoModelForCausalLM

+from optimum.neuron import NeuronModelForCausalLM

# Instantiate and convert to Neuron a PyTorch checkpoint

-model = AutoModelForCausalLM.from_pretrained("gpt2")

+model = NeuronModelForCausalLM.from_pretrained("gpt2",

+ export=True,

+ batch_size=16,

+ num_cores=1,

+ auto_cast_type='f32')

As explained before, these parameters can only be configured during export. In particular, the batch_size of the inputs during inference must be equal to the batch_size used for exporting the model.
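Because the batch size is fixed at export time, a list of prompts of arbitrary length has to be regrouped into batches of exactly that size before calling the model. The sketch below shows one way to do this in plain Python. The helper fill_batches is hypothetical, and filling the last batch by repeating its final prompt is an assumption chosen for illustration; the filler outputs should be discarded afterwards.

```python
def fill_batches(prompts, batch_size):
    """Group prompts into batches of exactly batch_size.

    The last batch is filled by repeating its final prompt. Each entry is
    (batch, n_real), where n_real counts the prompts that are not filler.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batches = []
    for start in range(0, len(prompts), batch_size):
        chunk = list(prompts[start:start + batch_size])
        n_real = len(chunk)
        chunk += [chunk[-1]] * (batch_size - n_real)
        batches.append((chunk, n_real))
    return batches


print(fill_batches(["a", "b", "c"], batch_size=2))
# [(['a', 'b'], 2), (['c', 'c'], 1)]
```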

Each model architecture may also support specific additional parameters for advanced configuration. Please refer to the transformers-neuronx documentation.
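The memory figure given for num_cores allows a rough lower bound on how many cores a checkpoint needs. The sketch below counts weight storage only and ignores activations, caches and runtime overhead, so real requirements will be higher. The helper name min_cores_for_weights is hypothetical, and treating 16 GB as 16 GiB is an assumption.

```python
import math

# Bytes used to store one weight for each auto_cast_type value.
BYTES_PER_PARAM = {"f32": 4, "f16": 2, "bf16": 2}


def min_cores_for_weights(num_params, auto_cast_type="f32", core_memory_gib=16):
    """Lower bound on num_cores, counting weight storage only."""
    weight_bytes = num_params * BYTES_PER_PARAM[auto_cast_type]
    core_bytes = core_memory_gib * 1024**3
    return max(1, math.ceil(weight_bytes / core_bytes))


# A 7-billion-parameter model: 28e9 bytes in f32, 14e9 bytes in bf16.
print(min_cores_for_weights(7_000_000_000, "f32"))   # 2
print(min_cores_for_weights(7_000_000_000, "bf16"))  # 1
```

Treat the result as a starting point for choosing num_cores, not as a guarantee that the export will fit.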

Text generation inference

As with the original transformers models, use generate() instead of forward() to generate text sequences.

import torch

from transformers import AutoTokenizer

-from transformers import AutoModelForCausalLM

+from optimum.neuron import NeuronModelForCausalLM

# Instantiate and convert to Neuron a PyTorch checkpoint

-model = AutoModelForCausalLM.from_pretrained("gpt2")

+model = NeuronModelForCausalLM.from_pretrained("gpt2", export=True)

tokenizer = AutoTokenizer.from_pretrained("gpt2")

tokenizer.pad_token_id = tokenizer.eos_token_id

tokens = tokenizer("I really wish ", return_tensors="pt")

with torch.inference_mode():

    sample_output = model.generate(

        **tokens,

        do_sample=True,

        min_length=128,

        max_length=256,

        temperature=0.7,

    )

outputs = [tokenizer.decode(tok) for tok in sample_output]

print(outputs)

Stable Diffusion

Optimum extends 🤗Diffusers to support inference on Neuron. To get started, make sure you have installed Diffusers:

pip install "optimum[neuronx, diffusers]"

You can also accelerate the inference of Stable Diffusion on neuronx devices (inf2 / trn1). Four components need to be exported to the .neuron format to boost performance:

- Text encoder

- U-Net

- VAE encoder

- VAE decoder

Text-to-Image

The NeuronStableDiffusionPipeline class allows you to generate images from a text prompt on Neuron devices, much as you would with Diffusers.

As with other tasks, you need to compile the models before you can run inference. The export can be done either via the CLI or via the NeuronStableDiffusionPipeline API.

To apply the optimized computation of the U-Net’s attention scores, set the environment variable with export NEURON_FUSE_SOFTMAX=1.

Besides, don’t hesitate to tweak the compilation configuration to find the best tradeoff between performance and accuracy for your use case. By default, we suggest casting FP32 matrix multiplication operations to BF16, which offers good performance with a moderate loss of accuracy. Check out the guide from the AWS Neuron documentation to better understand the options for your compilation.

Here is an example of exporting Stable Diffusion components with NeuronStableDiffusionPipeline:

>>> from optimum.neuron import NeuronStableDiffusionPipeline

>>> model_id = "runwayml/stable-diffusion-v1-5"

>>> compiler_args = {"auto_cast": "matmul", "auto_cast_type": "bf16"}

>>> input_shapes = {"batch_size": 1, "height": 512, "width": 512}

>>> stable_diffusion = NeuronStableDiffusionPipeline.from_pretrained(model_id, export=True, **compiler_args, **input_shapes)

# Save locally or upload to the HuggingFace Hub

>>> save_directory = "sd_neuron/"

>>> stable_diffusion.save_pretrained(save_directory)

>>> stable_diffusion.push_to_hub(

... save_directory, repository_id="my-neuron-repo", use_auth_token=True

... )

Now generate an image with a prompt on Neuron:

>>> prompt = "a photo of an astronaut riding a horse on mars"

>>> image = stable_diffusion(prompt).images[0]
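If you drive the export from a Python script rather than a shell, the NEURON_FUSE_SOFTMAX setting mentioned above can also be applied from within the script. This is a minimal sketch, assuming the variable is read from the process environment when compilation starts; it must therefore be set before the export call.

```python
import os

# Assumption: the compiler reads this variable from the process environment,
# so it has to be set before from_pretrained(..., export=True) is called.
os.environ["NEURON_FUSE_SOFTMAX"] = "1"

print(os.environ["NEURON_FUSE_SOFTMAX"])  # 1
```

Setting the variable in the shell with export, as described earlier, remains the documented route; the in-script form is only a convenience.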

Image-to-Image

With the NeuronStableDiffusionImg2ImgPipeline class, you can generate a new image conditioned on a text prompt and an initial image.

import requests

from PIL import Image

from io import BytesIO

from optimum.neuron import NeuronStableDiffusionImg2ImgPipeline

model_id = "nitrosocke/Ghibli-Diffusion"

input_shapes = {"batch_size": 1, "height": 512, "width": 512}

pipeline = NeuronStableDiffusionImg2ImgPipeline.from_pretrained(model_id, export=True, **input_shapes, device_ids=[0, 1])

pipeline.save_pretrained("sd_img2img/")

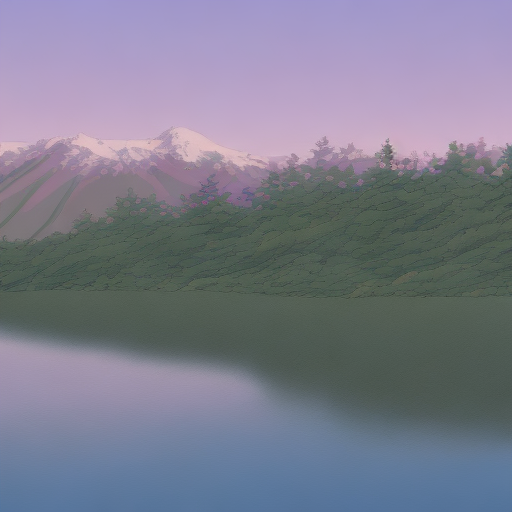

url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

response = requests.get(url)

init_image = Image.open(BytesIO(response.content)).convert("RGB")

init_image = init_image.resize((512, 512))

prompt = "ghibli style, a fantasy landscape with snowcapped mountains, trees, lake with detailed reflection. sunlight and cloud in the sky, warm colors, 8K"

image = pipeline(prompt=prompt, image=init_image, strength=0.75, guidance_scale=7.5).images[0]

image.save("fantasy_landscape.png")

| image | prompt | output |
|---|---|---|
| (input image) | ghibli style, a fantasy landscape with snowcapped mountains, trees, lake with detailed reflection. warm colors, 8K | (generated image) |

Inpaint

With the NeuronStableDiffusionInpaintPipeline class, you can edit specific parts of an image by providing a mask and a text prompt.

import requests

from PIL import Image

from io import BytesIO

from optimum.neuron import NeuronStableDiffusionInpaintPipeline

model_id = "runwayml/stable-diffusion-inpainting"

input_shapes = {"batch_size": 1, "height": 512, "width": 512}

pipeline = NeuronStableDiffusionInpaintPipeline.from_pretrained(model_id, export=True, **input_shapes, device_ids=[0, 1])

pipeline.save_pretrained("sd_inpaint/")

def download_image(url):

    response = requests.get(url)

    return Image.open(BytesIO(response.content)).convert("RGB")

img_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo.png"

mask_url = "https://raw.githubusercontent.com/CompVis/latent-diffusion/main/data/inpainting_examples/overture-creations-5sI6fQgYIuo_mask.png"

init_image = download_image(img_url).resize((512, 512))

mask_image = download_image(mask_url).resize((512, 512))

prompt = "Face of a yellow cat, high resolution, sitting on a park bench"

image = pipeline(prompt=prompt, image=init_image, mask_image=mask_image).images[0]

image.save("cat_on_bench.png")

| image | mask_image | prompt | output |
|---|---|---|---|
| (input image) | (mask image) | Face of a yellow cat, high resolution, sitting on a park bench | (generated image) |

Stable Diffusion XL

As with Stable Diffusion, you can use the NeuronStableDiffusionXLPipeline API to export SDXL models and run inference on Neuron devices.

>>> from optimum.neuron import NeuronStableDiffusionXLPipeline

>>> model_id = "stabilityai/stable-diffusion-xl-base-1.0"

>>> compiler_args = {"auto_cast": "matmul", "auto_cast_type": "bf16"}

>>> input_shapes = {"batch_size": 1, "height": 1024, "width": 1024}

>>> stable_diffusion_xl = NeuronStableDiffusionXLPipeline.from_pretrained(model_id, export=True, **compiler_args, **input_shapes)

# Save locally or upload to the HuggingFace Hub

>>> save_directory = "sd_neuron_xl/"

>>> stable_diffusion_xl.save_pretrained(save_directory)

>>> stable_diffusion_xl.push_to_hub(

... save_directory, repository_id="my-neuron-repo", use_auth_token=True

... )

Now generate an image with a prompt on Neuron:

>>> prompt = "Astronaut in a jungle, cold color palette, muted colors, detailed, 8k"

>>> image = stable_diffusion_xl(prompt).images[0]

Happy inference with Neuron! 🚀