Kandinsky 2.2

The Kandinsky 2.2 release includes robust new text-to-image models that support text-to-image generation, image-to-image generation, image interpolation, and text-guided image inpainting. The general workflow to perform these tasks using Kandinsky 2.2 is the same as in Kandinsky 2.1. First, you will need to use a prior pipeline to generate image embeddings based on your text prompt, and then use one of the image decoding pipelines to generate the output image. The only difference is that in Kandinsky 2.2, all of the decoding pipelines no longer accept the prompt input, and the image generation process is conditioned with only image_embeds and negative_image_embeds.

As with Kandinsky 2.1, the easiest way to perform text-to-image generation is to use the combined Kandinsky pipeline. The process is identical: you only need to replace the Kandinsky 2.1 checkpoint with the 2.2 checkpoint.

from diffusers import AutoPipelineForText2Image

import torch

pipe = AutoPipelineForText2Image.from_pretrained("kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16)

pipe.enable_model_cpu_offload()

prompt = "An alien cheeseburger creature eating itself, claymation, cinematic, moody lighting"

negative_prompt = "low quality, bad quality"

image = pipe(prompt=prompt, negative_prompt=negative_prompt, prior_guidance_scale=1.0, height=768, width=768).images[0]

Now, let’s look at an example where we take separate steps to run the prior pipeline and text-to-image pipeline. This way, we can understand what’s happening under the hood and how Kandinsky 2.2 differs from Kandinsky 2.1.

First, let’s create the prior pipeline and text-to-image pipeline with Kandinsky 2.2 checkpoints.

from diffusers import DiffusionPipeline

import torch

pipe_prior = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16)

pipe_prior.to("cuda")

t2i_pipe = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16)

t2i_pipe.to("cuda")

You can then use pipe_prior to generate image embeddings.

prompt = "portrait of a woman, blue eyes, cinematic"

negative_prompt = "low quality, bad quality"

image_embeds, negative_image_embeds = pipe_prior(prompt, guidance_scale=1.0).to_tuple()

Now you can pass these embeddings to the text-to-image pipeline. When using Kandinsky 2.2, you don’t need to pass the prompt (but you do with the previous version, Kandinsky 2.1).

image = t2i_pipe(image_embeds=image_embeds, negative_image_embeds=negative_image_embeds, height=768, width=768).images[0]

image.save("portrait.png")

We used the text-to-image pipeline as an example, but the same process applies to all decoding pipelines in Kandinsky 2.2. For more information, please refer to our API section for each pipeline.
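To make the difference between the two versions concrete, here is a minimal sketch of how the keyword arguments for a decoding pipeline could be assembled. The helper name `build_decoder_inputs` and the version strings are hypothetical and not part of diffusers; only the argument names `prompt`, `image_embeds`, and `negative_image_embeds` come from the text above.

```python
from typing import Any, Dict, Optional


def build_decoder_inputs(
    version: str,
    image_embeds: Any,
    negative_image_embeds: Any,
    prompt: Optional[str] = None,
) -> Dict[str, Any]:
    """Assemble keyword arguments for a Kandinsky decoding pipeline (hypothetical helper)."""
    kwargs: Dict[str, Any] = {
        "image_embeds": image_embeds,
        "negative_image_embeds": negative_image_embeds,
    }
    if version == "2.1":
        # Kandinsky 2.1 decoders still expect the text prompt.
        if prompt is None:
            raise ValueError("Kandinsky 2.1 decoding pipelines need a prompt")
        kwargs["prompt"] = prompt
    elif version != "2.2":
        raise ValueError(f"unknown version: {version}")
    # Kandinsky 2.2 decoders are conditioned on the embeddings only,
    # so any prompt passed in is deliberately left out.
    return kwargs


# Placeholder strings stand in for the real embedding tensors.
inputs_22 = build_decoder_inputs("2.2", "img_emb", "neg_emb", prompt="a cat")
inputs_21 = build_decoder_inputs("2.1", "img_emb", "neg_emb", prompt="a cat")
```

The resulting dictionary would then be unpacked into the decoder call, for example `t2i_pipe(**inputs_22, height=768, width=768)`.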

Text-to-Image Generation with ControlNet Conditioning

In the following, we give a simple example of how to use KandinskyV22ControlnetPipeline to add control to the text-to-image generation with a depth image.

First, let’s take an image and extract its depth map.

from diffusers.utils import load_image

img = load_image(

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main/kandinskyv22/cat.png"

).resize((768, 768))

We can use the depth-estimation pipeline from transformers to process the image and retrieve its depth map.

import torch

import numpy as np

from transformers import pipeline

from diffusers.utils import load_image

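def make_hint(image, depth_estimator):
    # Estimate depth; the result under "depth" is a single-channel image
    image = depth_estimator(image)["depth"]
    image = np.array(image)
    # Add a channel axis and repeat it three times: (H, W) -> (H, W, 3)
    image = image[:, :, None]
    image = np.concatenate([image, image, image], axis=2)
    # Scale to [0, 1] and move channels first: (H, W, 3) -> (3, H, W)
    detected_map = torch.from_numpy(image).float() / 255.0
    hint = detected_map.permute(2, 0, 1)
    return hint

depth_estimator = pipeline("depth-estimation")

# Add a batch dimension, cast to float16, and move to the GPU
hint = make_hint(img, depth_estimator).unsqueeze(0).half().to("cuda")

Now, we load the prior pipeline and the text-to-image controlnet pipeline: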

from diffusers import KandinskyV22PriorPipeline, KandinskyV22ControlnetPipeline

pipe_prior = KandinskyV22PriorPipeline.from_pretrained(

"kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16

)

pipe_prior = pipe_prior.to("cuda")

pipe = KandinskyV22ControlnetPipeline.from_pretrained(

"kandinsky-community/kandinsky-2-2-controlnet-depth", torch_dtype=torch.float16

)

pipe = pipe.to("cuda")

We pass the prompt and negative prompt through the prior to generate image embeddings:

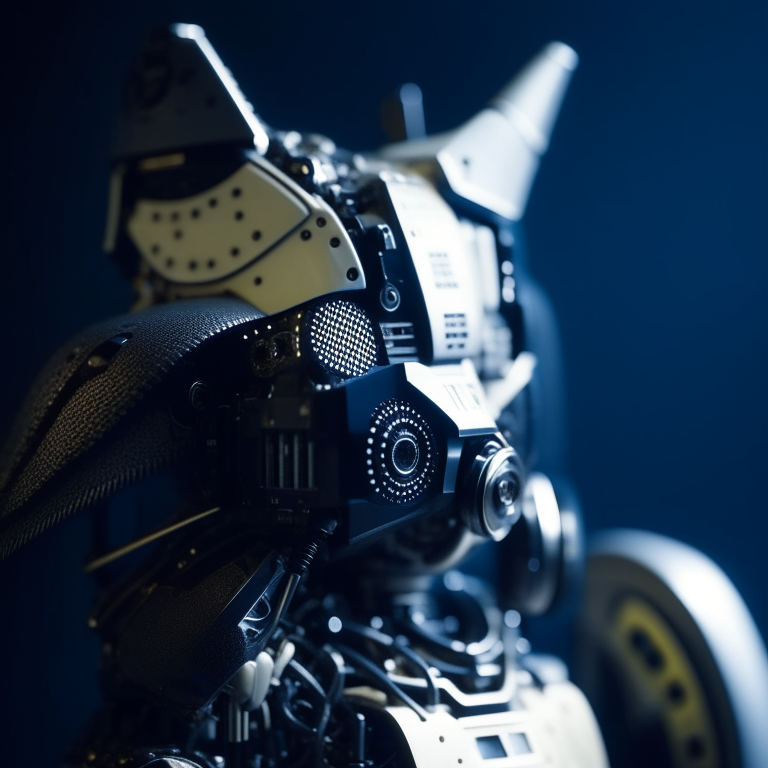

prompt = "A robot, 4k photo"

negative_prior_prompt = "lowres, text, error, cropped, worst quality, low quality, jpeg artifacts, ugly, duplicate, morbid, mutilated, out of frame, extra fingers, mutated hands, poorly drawn hands, poorly drawn face, mutation, deformed, blurry, dehydrated, bad anatomy, bad proportions, extra limbs, cloned face, disfigured, gross proportions, malformed limbs, missing arms, missing legs, extra arms, extra legs, fused fingers, too many fingers, long neck, username, watermark, signature"

generator = torch.Generator(device="cuda").manual_seed(43)

image_emb, zero_image_emb = pipe_prior(

prompt=prompt, negative_prompt=negative_prior_prompt, generator=generator

).to_tuple()

Now we can pass the image embeddings and the depth image we extracted to the controlnet pipeline. With Kandinsky 2.2, only prior pipelines accept prompt input. You do not need to pass the prompt to the controlnet pipeline.

images = pipe(

image_embeds=image_emb,

negative_image_embeds=zero_image_emb,

hint=hint,

num_inference_steps=50,

generator=generator,

height=768,

width=768,

).images

images[0].save("robot_cat.png")

The output image looks as follows:

Image-to-Image Generation with ControlNet Conditioning

Kandinsky 2.2 also includes a KandinskyV22ControlnetImg2ImgPipeline that will allow you to add control to the image generation process with both the image and its depth map. This pipeline works really well with KandinskyV22PriorEmb2EmbPipeline, which generates image embeddings based on both a text prompt and an image.

For our robot cat example, we will pass the prompt and cat image together to the prior pipeline to generate an image embedding. We will then use that image embedding and the depth map of the cat to further control the image generation process.

We can use the same cat image and its depth map from the last example.

import torch

import numpy as np

from diffusers import KandinskyV22PriorEmb2EmbPipeline, KandinskyV22ControlnetImg2ImgPipeline

from diffusers.utils import load_image

from transformers import pipeline

img = load_image(

"https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main" "/kandinskyv22/cat.png"

).resize((768, 768))

def make_hint(image, depth_estimator):
    image = depth_estimator(image)["depth"]
    image = np.array(image)
    image = image[:, :, None]
    image = np.concatenate([image, image, image], axis=2)
    detected_map = torch.from_numpy(image).float() / 255.0
    hint = detected_map.permute(2, 0, 1)
    return hint

depth_estimator = pipeline("depth-estimation")

hint = make_hint(img, depth_estimator).unsqueeze(0).half().to("cuda")

pipe_prior = KandinskyV22PriorEmb2EmbPipeline.from_pretrained(

"kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16

)

pipe_prior = pipe_prior.to("cuda")

pipe = KandinskyV22ControlnetImg2ImgPipeline.from_pretrained(

"kandinsky-community/kandinsky-2-2-controlnet-depth", torch_dtype=torch.float16

)

pipe = pipe.to("cuda")

prompt = "A robot, 4k photo"

negative_prior_prompt = "lowres, text, error, cropped, worst quality, low quality, jpeg artifacts, ugly, duplicate, morbid, mutilated, out of frame, extra fingers, mutated hands, poorly drawn hands, poorly drawn face, mutation, deformed, blurry, dehydrated, bad anatomy, bad proportions, extra limbs, cloned face, disfigured, gross proportions, malformed limbs, missing arms, missing legs, extra arms, extra legs, fused fingers, too many fingers, long neck, username, watermark, signature"

generator = torch.Generator(device="cuda").manual_seed(43)

# run prior pipeline

img_emb = pipe_prior(prompt=prompt, image=img, strength=0.85, generator=generator)

negative_emb = pipe_prior(prompt=negative_prior_prompt, image=img, strength=1, generator=generator)

# run controlnet img2img pipeline

images = pipe(

image=img,

strength=0.5,

image_embeds=img_emb.image_embeds,

negative_image_embeds=negative_emb.image_embeds,

hint=hint,

num_inference_steps=50,

generator=generator,

height=768,

width=768,

).images

images[0].save("robot_cat.png")

Here is the output. Compared with the output from our text-to-image controlnet example, it keeps many more of the cat’s facial details from the original image and works them into the robot style we asked for.

Optimization

Running Kandinsky inference requires two pipelines: first a prior pipeline (KandinskyPriorPipeline), then an image decoding pipeline, which is one of KandinskyPipeline, KandinskyImg2ImgPipeline, or KandinskyInpaintPipeline.

The image decoding pipeline accounts for the bulk of the computation time, so optimization efforts should focus on it.

When running with PyTorch < 2.0, we strongly recommend making use of xformers to speed up inference. This can be done by simply running:

from diffusers import DiffusionPipeline

import torch

t2i_pipe = DiffusionPipeline.from_pretrained("kandinsky-community/kandinsky-2-1", torch_dtype=torch.float16)

t2i_pipe.enable_xformers_memory_efficient_attention()

When running on PyTorch >= 2.0, PyTorch’s SDPA attention will automatically be used. For more information on PyTorch’s SDPA, feel free to have a look at this blog post.

To have explicit control, you can also manually set the pipeline to use PyTorch 2.0’s efficient attention:

from diffusers.models.attention_processor import AttnAddedKVProcessor2_0

t2i_pipe.unet.set_attn_processor(AttnAddedKVProcessor2_0())

The slowest and most memory-intensive attention processor is the default AttnAddedKVProcessor. We do not recommend using it except for testing purposes or in cases where highly deterministic behavior is desired. You can set it with:

from diffusers.models.attention_processor import AttnAddedKVProcessor

t2i_pipe.unet.set_attn_processor(AttnAddedKVProcessor())

With PyTorch >= 2.0, you can also use Kandinsky with torch.compile, which, depending on your hardware, can significantly speed up inference once the model is compiled. To use Kandinsky with torch.compile, you can do:

t2i_pipe.unet.to(memory_format=torch.channels_last)

t2i_pipe.unet = torch.compile(t2i_pipe.unet, mode="reduce-overhead", fullgraph=True)

After compilation, you should see noticeably faster inference. For more information, feel free to have a look at our PyTorch 2.0 benchmark.
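The attention recommendations in this section can be condensed into a small decision helper. This is only a sketch: `pick_attention_setup` is a hypothetical function, and it assumes the version string follows the usual `major.minor.patch` form reported by `torch.__version__`.

```python
def pick_attention_setup(torch_version: str) -> str:
    """Return which attention setup the text above recommends for a PyTorch version."""
    # Keep only the major number, e.g. "2.0.1+cu118" -> 2
    major = int(torch_version.split(".")[0])
    if major >= 2:
        # SDPA is used automatically; nothing has to be enabled by hand.
        return "sdpa"
    # Older PyTorch: enable xformers memory-efficient attention instead.
    return "xformers"


choice_old = pick_attention_setup("1.13.1")
choice_new = pick_attention_setup("2.0.1+cu118")
```

In practice, you would call `t2i_pipe.enable_xformers_memory_efficient_attention()` only when the helper returns `"xformers"`.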

To generate images directly from a single pipeline, you can use KandinskyV22CombinedPipeline, KandinskyV22Img2ImgCombinedPipeline, or KandinskyV22InpaintCombinedPipeline. These combined pipelines wrap the KandinskyV22PriorPipeline together with KandinskyV22Pipeline, KandinskyV22Img2ImgPipeline, and KandinskyV22InpaintPipeline, respectively, into a single pipeline for a simpler user experience.

Available Pipelines:

| Pipeline | Tasks |
|---|---|
| pipeline_kandinsky2_2.py | Text-to-Image Generation |
| pipeline_kandinsky2_2_combined.py | End-to-End Text-to-Image, Image-to-Image, and Inpainting Generation |
| pipeline_kandinsky2_2_inpaint.py | Image-Guided Image Generation |
| pipeline_kandinsky2_2_img2img.py | Image-Guided Image Generation |
| pipeline_kandinsky2_2_controlnet.py | Image-Guided Image Generation |
| pipeline_kandinsky2_2_controlnet_img2img.py | Image-Guided Image Generation |

KandinskyV22Pipeline

class diffusers.KandinskyV22Pipeline

( unet: UNet2DConditionModel, scheduler: DDPMScheduler, movq: VQModel )

Parameters

- scheduler (Union[DDIMScheduler, DDPMScheduler]): A scheduler to be used in combination with unet to generate image latents.
- unet (UNet2DConditionModel): Conditional U-Net architecture to denoise the image embedding.
- movq (VQModel): MoVQ Decoder to generate the image from the latents.

Pipeline for text-to-image generation using Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

negative_image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

height: int = 512

width: int = 512

num_inference_steps: int = 100

guidance_scale: float = 4.0

num_images_per_prompt: int = 1

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

latents: typing.Optional[torch.FloatTensor] = None

output_type: typing.Optional[str] = 'pil'

callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None

callback_steps: int = 1

return_dict: bool = True

) → ImagePipelineOutput or tuple

Parameters

- image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the text prompt, used to condition the image generation.
- negative_image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the negative text prompt, used to condition the image generation.
- height (int, optional, defaults to 512): The height in pixels of the generated image.
- width (int, optional, defaults to 512): The width in pixels of the generated image.
- num_inference_steps (int, optional, defaults to 100): The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.
- guidance_scale (float, optional, defaults to 4.0): Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.
- num_images_per_prompt (int, optional, defaults to 1): The number of images to generate per prompt.
- generator (torch.Generator or List[torch.Generator], optional): One or a list of torch generator(s) to make generation deterministic.
- latents (torch.FloatTensor, optional): Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.
- output_type (str, optional, defaults to "pil"): The output format of the generated image. Choose between "pil" (PIL.Image.Image), "np" (np.array), or "pt" (torch.Tensor).
- callback (Callable, optional): A function that is called every callback_steps steps during inference. The function is called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).
- callback_steps (int, optional, defaults to 1): The frequency at which the callback function is called. If not specified, the callback is called at every step.
- return_dict (bool, optional, defaults to True): Whether or not to return an ImagePipelineOutput instead of a plain tuple.

Returns

ImagePipelineOutput or tuple

Function invoked when calling the pipeline for generation.
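As a rough illustration of what `guidance_scale` does, the sketch below applies the classifier-free guidance combination to two toy noise predictions. It assumes the common formulation `uncond + w * (cond - uncond)`; plain Python lists stand in for tensors, and `apply_guidance` is a hypothetical name rather than a diffusers function. For this pipeline, the conditional branch would presumably correspond to `image_embeds` and the unconditional branch to `negative_image_embeds`.

```python
from typing import List


def apply_guidance(
    noise_uncond: List[float], noise_cond: List[float], guidance_scale: float
) -> List[float]:
    """Combine unconditional and conditional predictions with weight w = guidance_scale."""
    return [u + guidance_scale * (c - u) for u, c in zip(noise_uncond, noise_cond)]


uncond = [0.0, 1.0]
cond = [1.0, 3.0]

# w = 1 reproduces the conditional prediction, so guidance has no extra effect.
no_guidance = apply_guidance(uncond, cond, 1.0)
# w = 4 (the documented default) pushes further along the (cond - uncond) direction.
strong = apply_guidance(uncond, cond, 4.0)
```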

Examples:

>>> from diffusers import KandinskyV22Pipeline, KandinskyV22PriorPipeline

>>> import torch

>>> pipe_prior = KandinskyV22PriorPipeline.from_pretrained("kandinsky-community/kandinsky-2-2-prior")

>>> pipe_prior.to("cuda")

>>> prompt = "red cat, 4k photo"

>>> out = pipe_prior(prompt)

>>> image_emb = out.image_embeds

>>> zero_image_emb = out.negative_image_embeds

>>> pipe = KandinskyV22Pipeline.from_pretrained("kandinsky-community/kandinsky-2-2-decoder")

>>> pipe.to("cuda")

>>> image = pipe(

... image_embeds=image_emb,

... negative_image_embeds=zero_image_emb,

... height=768,

... width=768,

... num_inference_steps=50,

... ).images

>>> image[0].save("cat.png")

KandinskyV22ControlnetPipeline

class diffusers.KandinskyV22ControlnetPipeline

( unet: UNet2DConditionModel, scheduler: DDPMScheduler, movq: VQModel )

Parameters

- scheduler (DDIMScheduler): A scheduler to be used in combination with unet to generate image latents.
- unet (UNet2DConditionModel): Conditional U-Net architecture to denoise the image embedding.
- movq (VQModel): MoVQ Decoder to generate the image from the latents.

Pipeline for text-to-image generation using Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

negative_image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

hint: FloatTensor

height: int = 512

width: int = 512

num_inference_steps: int = 100

guidance_scale: float = 4.0

num_images_per_prompt: int = 1

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

latents: typing.Optional[torch.FloatTensor] = None

output_type: typing.Optional[str] = 'pil'

callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None

callback_steps: int = 1

return_dict: bool = True

) → ImagePipelineOutput or tuple

Parameters

- image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the text prompt, used to condition the image generation.
- negative_image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the negative text prompt, used to condition the image generation.
- hint (torch.FloatTensor): The controlnet condition.
- height (int, optional, defaults to 512): The height in pixels of the generated image.
- width (int, optional, defaults to 512): The width in pixels of the generated image.
- num_inference_steps (int, optional, defaults to 100): The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.
- guidance_scale (float, optional, defaults to 4.0): Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.
- num_images_per_prompt (int, optional, defaults to 1): The number of images to generate per prompt.
- generator (torch.Generator or List[torch.Generator], optional): One or a list of torch generator(s) to make generation deterministic.
- latents (torch.FloatTensor, optional): Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.
- output_type (str, optional, defaults to "pil"): The output format of the generated image. Choose between "pil" (PIL.Image.Image), "np" (np.array), or "pt" (torch.Tensor).
- callback (Callable, optional): A function that is called every callback_steps steps during inference. The function is called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).
- callback_steps (int, optional, defaults to 1): The frequency at which the callback function is called. If not specified, the callback is called at every step.
- return_dict (bool, optional, defaults to True): Whether or not to return an ImagePipelineOutput instead of a plain tuple.

Returns

ImagePipelineOutput or tuple

Function invoked when calling the pipeline for generation.

Examples:
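No example is given here, so the following sketch only traces the shape of `hint` as it is built in the depth ControlNet walkthrough earlier in this document. It assumes the depth estimator returns a single-channel map with the same height and width as the input image; `trace_hint_shape` is a hypothetical helper that manipulates shape tuples, not tensors.

```python
from typing import List, Tuple


def trace_hint_shape(height: int, width: int) -> List[Tuple[str, Tuple[int, ...]]]:
    """List the shape after each step of make_hint plus the final unsqueeze(0)."""
    return [
        ("np.array(depth)", (height, width)),                 # single-channel depth map
        ("image[:, :, None]", (height, width, 1)),            # add a channel axis
        ("np.concatenate(..., axis=2)", (height, width, 3)),  # repeat to 3 channels
        ("permute(2, 0, 1)", (3, height, width)),             # channels first
        ("unsqueeze(0)", (1, 3, height, width)),              # add a batch dimension
    ]


steps = trace_hint_shape(768, 768)
final_shape = steps[-1][1]
```

The final shape is laid out as (batch, channels, height, width), with height and width matching the `height=768` and `width=768` arguments used in the walkthrough.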

KandinskyV22ControlnetImg2ImgPipeline

class diffusers.KandinskyV22ControlnetImg2ImgPipeline

( unet: UNet2DConditionModel, scheduler: DDPMScheduler, movq: VQModel )

Parameters

- scheduler (DDIMScheduler): A scheduler to be used in combination with unet to generate image latents.
- unet (UNet2DConditionModel): Conditional U-Net architecture to denoise the image embedding.
- movq (VQModel): MoVQ Decoder to generate the image from the latents.

Pipeline for image-to-image generation using Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

image: typing.Union[torch.FloatTensor, PIL.Image.Image, typing.List[torch.FloatTensor], typing.List[PIL.Image.Image]]

negative_image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

hint: FloatTensor

height: int = 512

width: int = 512

num_inference_steps: int = 100

guidance_scale: float = 4.0

strength: float = 0.3

num_images_per_prompt: int = 1

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

output_type: typing.Optional[str] = 'pil'

callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None

callback_steps: int = 1

return_dict: bool = True

) → ImagePipelineOutput or tuple

Parameters

- image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the text prompt, used to condition the image generation.
- image (torch.FloatTensor, PIL.Image.Image, np.ndarray, List[torch.FloatTensor], List[PIL.Image.Image], or List[np.ndarray]): Image, or tensor representing an image batch, that will be used as the starting point for the process. Can also accept image latents as image; if latents are passed directly, they will not be encoded again.
- strength (float, optional, defaults to 0.3): Conceptually, indicates how much to transform the reference image. Must be between 0 and 1. image will be used as a starting point, with more noise added to it the larger the strength. The number of denoising steps depends on the amount of noise initially added. When strength is 1, the added noise is maximal and the denoising process runs for the full number of iterations specified in num_inference_steps. A value of 1 therefore essentially ignores image.
- hint (torch.FloatTensor): The controlnet condition.
- negative_image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the negative text prompt, used to condition the image generation.
- height (int, optional, defaults to 512): The height in pixels of the generated image.
- width (int, optional, defaults to 512): The width in pixels of the generated image.
- num_inference_steps (int, optional, defaults to 100): The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.
- guidance_scale (float, optional, defaults to 4.0): Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.
- num_images_per_prompt (int, optional, defaults to 1): The number of images to generate per prompt.
- generator (torch.Generator or List[torch.Generator], optional): One or a list of torch generator(s) to make generation deterministic.
- output_type (str, optional, defaults to "pil"): The output format of the generated image. Choose between "pil" (PIL.Image.Image), "np" (np.array), or "pt" (torch.Tensor).
- callback (Callable, optional): A function that is called every callback_steps steps during inference. The function is called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).
- callback_steps (int, optional, defaults to 1): The frequency at which the callback function is called. If not specified, the callback is called at every step.
- return_dict (bool, optional, defaults to True): Whether or not to return an ImagePipelineOutput instead of a plain tuple.

Returns

ImagePipelineOutput or tuple

Function invoked when calling the pipeline for generation.

Examples:
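No example is given here. As a sketch of how `strength` relates to the number of denoising steps that actually run, the helper below follows the step-trimming logic commonly used by image-to-image pipelines in diffusers; treat the exact formula as an assumption rather than a guarantee about this pipeline. `effective_steps` is a hypothetical name.

```python
def effective_steps(num_inference_steps: int, strength: float) -> int:
    """Estimate how many denoising steps run for a given strength (assumed logic)."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    # Portion of the schedule covered by the noise added to the input image.
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    # Steps skipped at the start of the schedule.
    t_start = max(num_inference_steps - init_timestep, 0)
    return num_inference_steps - t_start


half = effective_steps(50, 0.5)       # settings from the robot cat walkthrough
full = effective_steps(50, 1.0)       # strength 1 runs the full schedule
default = effective_steps(100, 0.3)   # defaults from the signature above
```

Under these assumptions, the walkthrough's `num_inference_steps=50` with `strength=0.5` runs 25 denoising steps, which is why lower strength values both preserve more of the input image and finish faster.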

KandinskyV22Img2ImgPipeline

class diffusers.KandinskyV22Img2ImgPipeline

( unet: UNet2DConditionModel, scheduler: DDPMScheduler, movq: VQModel )

Parameters

- scheduler (DDIMScheduler): A scheduler to be used in combination with unet to generate image latents.
- unet (UNet2DConditionModel): Conditional U-Net architecture to denoise the image embedding.
- movq (VQModel): MoVQ Decoder to generate the image from the latents.

Pipeline for image-to-image generation using Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

image: typing.Union[torch.FloatTensor, PIL.Image.Image, typing.List[torch.FloatTensor], typing.List[PIL.Image.Image]]

negative_image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

height: int = 512

width: int = 512

num_inference_steps: int = 100

guidance_scale: float = 4.0

strength: float = 0.3

num_images_per_prompt: int = 1

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

output_type: typing.Optional[str] = 'pil'

callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None

callback_steps: int = 1

return_dict: bool = True

) → ImagePipelineOutput or tuple

Parameters

- image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the text prompt, used to condition the image generation.
- image (torch.FloatTensor, PIL.Image.Image, np.ndarray, List[torch.FloatTensor], List[PIL.Image.Image], or List[np.ndarray]): Image, or tensor representing an image batch, that will be used as the starting point for the process. Can also accept image latents as image; if latents are passed directly, they will not be encoded again.
- strength (float, optional, defaults to 0.3): Conceptually, indicates how much to transform the reference image. Must be between 0 and 1. image will be used as a starting point, with more noise added to it the larger the strength. The number of denoising steps depends on the amount of noise initially added. When strength is 1, the added noise is maximal and the denoising process runs for the full number of iterations specified in num_inference_steps. A value of 1 therefore essentially ignores image.
- negative_image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the negative text prompt, used to condition the image generation.
- height (int, optional, defaults to 512): The height in pixels of the generated image.
- width (int, optional, defaults to 512): The width in pixels of the generated image.
- num_inference_steps (int, optional, defaults to 100): The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.
- guidance_scale (float, optional, defaults to 4.0): Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.
- num_images_per_prompt (int, optional, defaults to 1): The number of images to generate per prompt.
- generator (torch.Generator or List[torch.Generator], optional): One or a list of torch generator(s) to make generation deterministic.
- output_type (str, optional, defaults to "pil"): The output format of the generated image. Choose between "pil" (PIL.Image.Image), "np" (np.array), or "pt" (torch.Tensor).
- callback (Callable, optional): A function that is called every callback_steps steps during inference. The function is called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).
- callback_steps (int, optional, defaults to 1): The frequency at which the callback function is called. If not specified, the callback is called at every step.
- return_dict (bool, optional, defaults to True): Whether or not to return an ImagePipelineOutput instead of a plain tuple.

Returns

ImagePipelineOutput or tuple

Function invoked when calling the pipeline for generation.

Examples:
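No example is given here. The sketch below simulates how `callback` and `callback_steps` interact, using a plain loop in place of the real denoising loop. The loop body, the descending timestep values, and the name `run_loop` are stand-ins chosen for illustration; only the callback signature `callback(step, timestep, latents)` comes from the parameter description above.

```python
from typing import Callable, List, Optional


def run_loop(
    num_inference_steps: int,
    callback: Optional[Callable[[int, int, float], None]] = None,
    callback_steps: int = 1,
) -> None:
    """Toy loop that invokes the callback every `callback_steps` iterations."""
    latents = 0.0  # stand-in for the latents tensor
    timesteps = range(num_inference_steps - 1, -1, -1)  # assumed descending schedule
    for step, timestep in enumerate(timesteps):
        latents += 1.0  # stand-in for one denoising update
        if callback is not None and step % callback_steps == 0:
            callback(step, timestep, latents)


seen_steps: List[int] = []
run_loop(
    10,
    callback=lambda step, timestep, latents: seen_steps.append(step),
    callback_steps=3,
)
```

A callback like this is typically used to log progress or inspect intermediate latents without modifying the pipeline itself.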

KandinskyV22InpaintPipeline

class diffusers.KandinskyV22InpaintPipeline

( unet: UNet2DConditionModel, scheduler: DDPMScheduler, movq: VQModel )

Parameters

- scheduler (DDIMScheduler): A scheduler to be used in combination with unet to generate image latents.
- unet (UNet2DConditionModel): Conditional U-Net architecture to denoise the image embedding.
- movq (VQModel): MoVQ Decoder to generate the image from the latents.

Pipeline for text-guided image inpainting using Kandinsky 2.2

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

image: typing.Union[torch.FloatTensor, PIL.Image.Image]

mask_image: typing.Union[torch.FloatTensor, PIL.Image.Image, numpy.ndarray]

negative_image_embeds: typing.Union[torch.FloatTensor, typing.List[torch.FloatTensor]]

height: int = 512

width: int = 512

num_inference_steps: int = 100

guidance_scale: float = 4.0

num_images_per_prompt: int = 1

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

latents: typing.Optional[torch.FloatTensor] = None

output_type: typing.Optional[str] = 'pil'

callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None

callback_steps: int = 1

return_dict: bool = True

) → ImagePipelineOutput or tuple

Parameters

- image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the text prompt, used to condition the image generation.
- image (torch.FloatTensor or PIL.Image.Image): Image, or tensor representing an image batch, which will be inpainted; that is, parts of the image will be masked out with mask_image and repainted according to the image embeddings.
- mask_image (torch.FloatTensor, PIL.Image.Image, or np.ndarray): Tensor representing an image batch, used to mask image. White pixels in the mask will be repainted, while black pixels will be preserved. If mask_image is a PIL image, it will be converted to a single channel (luminance) before use. If it’s a tensor, it should contain one color channel (L) instead of 3, so the expected shape would be (B, H, W, 1).
- negative_image_embeds (torch.FloatTensor or List[torch.FloatTensor]): The CLIP image embeddings for the negative text prompt, used to condition the image generation.
- height (int, optional, defaults to 512): The height in pixels of the generated image.
- width (int, optional, defaults to 512): The width in pixels of the generated image.
- num_inference_steps (int, optional, defaults to 100): The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.
- guidance_scale (float, optional, defaults to 4.0): Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.
- num_images_per_prompt (int, optional, defaults to 1): The number of images to generate per prompt.
- generator (torch.Generator or List[torch.Generator], optional): One or a list of torch generator(s) to make generation deterministic.
- latents (torch.FloatTensor, optional): Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.
- output_type (str, optional, defaults to "pil"): The output format of the generated image. Choose between "pil" (PIL.Image.Image), "np" (np.array), or "pt" (torch.Tensor).
- callback (Callable, optional): A function that is called every callback_steps steps during inference. The function is called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).
- callback_steps (int, optional, defaults to 1): The frequency at which the callback function is called. If not specified, the callback is called at every step.
- return_dict (bool, optional, defaults to True): Whether or not to return an ImagePipelineOutput instead of a plain tuple.

Returns

ImagePipelineOutput or tuple

Function invoked when calling the pipeline for generation.
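The mask convention described for mask_image (white pixels are repainted, black pixels are preserved, and a PIL mask is reduced to one luminance channel) can be illustrated with a small pure-Python sketch. This is not the pipeline's internal code: `to_luminance` and `composite` are hypothetical helpers, the 0.5 threshold is an assumption chosen for illustration, and the luma weights are the ones PIL uses for its "L" conversion.

```python
def to_luminance(rgb):
    """Convert one (R, G, B) pixel in 0..255 to a luminance value in 0..1."""
    r, g, b = rgb
    return (0.299 * r + 0.587 * g + 0.114 * b) / 255.0


def composite(original, generated, mask_rgb, threshold=0.5):
    """Take `generated` where the mask is white and keep `original` where it is black."""
    out = []
    for orig_row, gen_row, mask_row in zip(original, generated, mask_rgb):
        row = []
        for orig_px, gen_px, mask_px in zip(orig_row, gen_row, mask_row):
            # Assumption: a pixel counts as "white" when its luminance passes the threshold.
            repaint = to_luminance(mask_px) >= threshold
            row.append(gen_px if repaint else orig_px)
        out.append(row)
    return out


# Toy 2x2 "images": "o" marks an original pixel, "g" a generated one.
original = [["o", "o"], ["o", "o"]]
generated = [["g", "g"], ["g", "g"]]
mask = [[(255, 255, 255), (0, 0, 0)], [(0, 0, 0), (255, 255, 255)]]
result = composite(original, generated, mask)
```

In this toy case only the two positions under white mask pixels are replaced, which is the behavior to expect when preparing a mask for the pipeline.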

Examples:

KandinskyV22PriorPipeline

class diffusers.KandinskyV22PriorPipeline

( prior: PriorTransformer, image_encoder: CLIPVisionModelWithProjection, text_encoder: CLIPTextModelWithProjection, tokenizer: CLIPTokenizer, scheduler: UnCLIPScheduler, image_processor: CLIPImageProcessor )

Parameters

- prior (PriorTransformer) — The canonical unCLIP prior to approximate the image embedding from the text embedding.

- image_encoder (CLIPVisionModelWithProjection) — Frozen image-encoder.

- text_encoder (CLIPTextModelWithProjection) — Frozen text-encoder.

- tokenizer (CLIPTokenizer) — Tokenizer of class CLIPTokenizer.

- scheduler (UnCLIPScheduler) — A scheduler to be used in combination with prior to generate image embedding.

- image_processor (CLIPImageProcessor) — An image processor to be used to preprocess the image for CLIP.

Pipeline for generating image prior for Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

prompt: typing.Union[str, typing.List[str]]

negative_prompt: typing.Union[str, typing.List[str], NoneType] = None

num_images_per_prompt: int = 1

num_inference_steps: int = 25

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

latents: typing.Optional[torch.FloatTensor] = None

guidance_scale: float = 4.0

output_type: typing.Optional[str] = 'pt'

return_dict: bool = True

)

→

KandinskyPriorPipelineOutput or tuple

Parameters

- prompt (str or List[str]) — The prompt or prompts to guide the image generation.

- negative_prompt (str or List[str], optional) — The prompt or prompts not to guide the image generation. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- num_images_per_prompt (int, optional, defaults to 1) — The number of images to generate per prompt.

- num_inference_steps (int, optional, defaults to 25) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- generator (torch.Generator or List[torch.Generator], optional) — One or a list of torch generator(s) to make generation deterministic.

- latents (torch.FloatTensor, optional) — Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.

- guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance scale is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- output_type (str, optional, defaults to "pt") — The output format of the generated image embeddings. Choose between: "np" (np.array) or "pt" (torch.Tensor).

- return_dict (bool, optional, defaults to True) — Whether or not to return a KandinskyPriorPipelineOutput instead of a plain tuple.

Returns

KandinskyPriorPipelineOutput or tuple

Function invoked when calling the pipeline for generation.
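The effect of guidance_scale can be sketched with the usual classifier-free guidance combination of an unconditional and a text-conditioned prediction. The snippet below is a minimal illustration on plain Python lists, not the pipeline's code: `apply_guidance` is a hypothetical helper and the two-element vectors are made-up stand-ins for predicted embeddings.

```python
def apply_guidance(uncond, cond, guidance_scale):
    """Move the unconditional prediction toward the conditional one by `guidance_scale`."""
    return [u + guidance_scale * (c - u) for u, c in zip(uncond, cond)]


# Made-up predictions for illustration only.
uncond_pred = [0.0, 1.0]
text_pred = [1.0, 3.0]

no_guidance = apply_guidance(uncond_pred, text_pred, 1.0)    # equals text_pred
with_guidance = apply_guidance(uncond_pred, text_pred, 4.0)  # pushed past text_pred
```

With a scale of 1 the result is just the text-conditioned prediction, which is why guidance only has an effect for guidance_scale > 1; larger values extrapolate further away from the unconditional prediction.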

Examples:

>>> from diffusers import KandinskyV22Pipeline, KandinskyV22PriorPipeline

>>> import torch

>>> pipe_prior = KandinskyV22PriorPipeline.from_pretrained("kandinsky-community/kandinsky-2-2-prior")

>>> pipe_prior.to("cuda")

>>> prompt = "red cat, 4k photo"

>>> image_emb, negative_image_emb = pipe_prior(prompt).to_tuple()

>>> pipe = KandinskyV22Pipeline.from_pretrained("kandinsky-community/kandinsky-2-2-decoder")

>>> pipe.to("cuda")

>>> image = pipe(

... image_embeds=image_emb,

... negative_image_embeds=negative_image_emb,

... height=768,

... width=768,

... num_inference_steps=50,

... ).images

>>> image[0].save("cat.png")

interpolate

(

images_and_prompts: typing.List[typing.Union[str, PIL.Image.Image, torch.FloatTensor]]

weights: typing.List[float]

num_images_per_prompt: int = 1

num_inference_steps: int = 25

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

latents: typing.Optional[torch.FloatTensor] = None

negative_prior_prompt: typing.Optional[str] = None

negative_prompt: str = ''

guidance_scale: float = 4.0

device = None

)

→

KandinskyPriorPipelineOutput or tuple

Parameters

- images_and_prompts (List[Union[str, PIL.Image.Image, torch.FloatTensor]]) — List of prompts and images to guide the image generation.

- weights (List[float]) — List of weights for each condition in images_and_prompts.

- num_images_per_prompt (int, optional, defaults to 1) — The number of images to generate per prompt.

- num_inference_steps (int, optional, defaults to 25) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- generator (torch.Generator or List[torch.Generator], optional) — One or a list of torch generator(s) to make generation deterministic.

- latents (torch.FloatTensor, optional) — Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.

- negative_prior_prompt (str, optional) — The prompt not to guide the prior diffusion process. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- negative_prompt (str or List[str], optional) — The prompt not to guide the image generation. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance scale is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

Returns

KandinskyPriorPipelineOutput or tuple

Function invoked when using the prior pipeline for interpolation.
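One way to picture how weights relates to images_and_prompts is as a weighted sum of per-condition embeddings. The sketch below assumes each prompt or image has already been turned into an embedding vector of the same length. The combination rule and the `blend_embeddings` helper are illustrative assumptions, not a statement of the pipeline's exact implementation.

```python
def blend_embeddings(embeddings, weights):
    """Return the weighted sum of equally sized embedding vectors."""
    if len(embeddings) != len(weights):
        raise ValueError("embeddings and weights must have the same length")
    blended = [0.0] * len(embeddings[0])
    for emb, weight in zip(embeddings, weights):
        for i, value in enumerate(emb):
            blended[i] += weight * value
    return blended


# Two made-up 2-dimensional embeddings standing in for "a cat" and an image.
cat_text_emb = [1.0, 0.0]
painting_emb = [0.0, 1.0]
mixed = blend_embeddings([cat_text_emb, painting_emb], [0.25, 0.75])
```

Weights that sum to 1, as in the example that follows, keep the blended vector on roughly the same scale as its inputs.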

Examples:

>>> from diffusers import KandinskyV22PriorPipeline, KandinskyV22Pipeline

>>> from diffusers.utils import load_image

>>> import PIL

>>> import torch

>>> from torchvision import transforms

>>> pipe_prior = KandinskyV22PriorPipeline.from_pretrained(

... "kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16

... )

>>> pipe_prior.to("cuda")

>>> img1 = load_image(

... "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main"

... "/kandinsky/cat.png"

... )

>>> img2 = load_image(

... "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main"

... "/kandinsky/starry_night.jpeg"

... )

>>> images_texts = ["a cat", img1, img2]

>>> weights = [0.3, 0.3, 0.4]

>>> out = pipe_prior.interpolate(images_texts, weights)

>>> pipe = KandinskyV22Pipeline.from_pretrained(

... "kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16

... )

>>> pipe.to("cuda")

>>> image = pipe(

... image_embeds=out.image_embeds,

... negative_image_embeds=out.negative_image_embeds,

... height=768,

... width=768,

... num_inference_steps=50,

... ).images[0]

>>> image.save("starry_cat.png")

KandinskyV22PriorEmb2EmbPipeline

class diffusers.KandinskyV22PriorEmb2EmbPipeline

( prior: PriorTransformer, image_encoder: CLIPVisionModelWithProjection, text_encoder: CLIPTextModelWithProjection, tokenizer: CLIPTokenizer, scheduler: UnCLIPScheduler, image_processor: CLIPImageProcessor )

Parameters

- prior (PriorTransformer) — The canonical unCLIP prior to approximate the image embedding from the text embedding.

- image_encoder (CLIPVisionModelWithProjection) — Frozen image-encoder.

- text_encoder (CLIPTextModelWithProjection) — Frozen text-encoder.

- tokenizer (CLIPTokenizer) — Tokenizer of class CLIPTokenizer.

- scheduler (UnCLIPScheduler) — A scheduler to be used in combination with prior to generate image embedding.

Pipeline for generating image prior for Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

prompt: typing.Union[str, typing.List[str]]

image: typing.Union[torch.Tensor, typing.List[torch.Tensor], PIL.Image.Image, typing.List[PIL.Image.Image]]

strength: float = 0.3

negative_prompt: typing.Union[str, typing.List[str], NoneType] = None

num_images_per_prompt: int = 1

num_inference_steps: int = 25

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

guidance_scale: float = 4.0

output_type: typing.Optional[str] = 'pt'

return_dict: bool = True

)

→

KandinskyPriorPipelineOutput or tuple

Parameters

- prompt (str or List[str]) — The prompt or prompts to guide the image generation.

- strength (float, optional, defaults to 0.3) — Conceptually, indicates how much to transform the reference emb. Must be between 0 and 1. image will be used as a starting point, adding more noise to it the larger the strength. The number of denoising steps depends on the amount of noise initially added.

- emb (torch.FloatTensor) — The image embedding.

- negative_prompt (str or List[str], optional) — The prompt or prompts not to guide the image generation. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- num_images_per_prompt (int, optional, defaults to 1) — The number of images to generate per prompt.

- num_inference_steps (int, optional, defaults to 25) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- generator (torch.Generator or List[torch.Generator], optional) — One or a list of torch generator(s) to make generation deterministic.

- guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance scale is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- output_type (str, optional, defaults to "pt") — The output format of the generated image embeddings. Choose between: "np" (np.array) or "pt" (torch.Tensor).

- return_dict (bool, optional, defaults to True) — Whether or not to return a KandinskyPriorPipelineOutput instead of a plain tuple.

Returns

KandinskyPriorPipelineOutput or tuple

Function invoked when calling the pipeline for generation.
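The statement that the number of denoising steps depends on strength can be made concrete with a short calculation. The sketch below follows the timestep truncation commonly used by image-to-image style pipelines in diffusers. Treating it as what happens here is an assumption, and `steps_for_strength` is a hypothetical helper.

```python
def steps_for_strength(num_inference_steps, strength):
    """Return how many denoising steps actually run for a given strength."""
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    # Only the last `init_timestep` steps of the schedule are executed.
    init_timestep = min(int(num_inference_steps * strength), num_inference_steps)
    t_start = max(num_inference_steps - init_timestep, 0)
    return num_inference_steps - t_start


default_run = steps_for_strength(25, 0.3)   # defaults from the signature above
full_run = steps_for_strength(100, 1.0)     # strength 1 runs every step
no_change = steps_for_strength(100, 0.0)    # strength 0 runs no steps
```

Under this assumption, the default of 25 steps with strength 0.3 runs only 7 denoising steps, so raising strength both adds noise and lengthens the effective schedule.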

Examples:

>>> from diffusers import KandinskyV22Pipeline, KandinskyV22PriorEmb2EmbPipeline

>>> from diffusers.utils import load_image

>>> import torch

>>> pipe_prior = KandinskyV22PriorEmb2EmbPipeline.from_pretrained(

...     "kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16

... )

>>> pipe_prior.to("cuda")

>>> prompt = "red cat, 4k photo"

>>> img = load_image(

...     "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main"

...     "/kandinsky/cat.png"

... )

>>> image_emb, negative_image_emb = pipe_prior(prompt, image=img, strength=0.2).to_tuple()

>>> pipe = KandinskyV22Pipeline.from_pretrained(

...     "kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16

... )

>>> pipe.to("cuda")

>>> image = pipe(

...     image_embeds=image_emb,

...     negative_image_embeds=negative_image_emb,

...     height=768,

...     width=768,

...     num_inference_steps=100,

... ).images

>>> image[0].save("cat.png")

interpolate

(

images_and_prompts: typing.List[typing.Union[str, PIL.Image.Image, torch.FloatTensor]]

weights: typing.List[float]

num_images_per_prompt: int = 1

num_inference_steps: int = 25

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

latents: typing.Optional[torch.FloatTensor] = None

negative_prior_prompt: typing.Optional[str] = None

negative_prompt: str = ''

guidance_scale: float = 4.0

device = None

)

→

KandinskyPriorPipelineOutput or tuple

Parameters

- images_and_prompts (List[Union[str, PIL.Image.Image, torch.FloatTensor]]) — List of prompts and images to guide the image generation.

- weights (List[float]) — List of weights for each condition in images_and_prompts.

- num_images_per_prompt (int, optional, defaults to 1) — The number of images to generate per prompt.

- num_inference_steps (int, optional, defaults to 25) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- generator (torch.Generator or List[torch.Generator], optional) — One or a list of torch generator(s) to make generation deterministic.

- latents (torch.FloatTensor, optional) — Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.

- negative_prior_prompt (str, optional) — The prompt not to guide the prior diffusion process. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- negative_prompt (str or List[str], optional) — The prompt not to guide the image generation. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance scale is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

Returns

KandinskyPriorPipelineOutput or tuple

Function invoked when using the prior pipeline for interpolation.

Examples:

>>> from diffusers import KandinskyV22PriorEmb2EmbPipeline, KandinskyV22Pipeline

>>> from diffusers.utils import load_image

>>> import PIL

>>> import torch

>>> from torchvision import transforms

>>> pipe_prior = KandinskyV22PriorEmb2EmbPipeline.from_pretrained(

... "kandinsky-community/kandinsky-2-2-prior", torch_dtype=torch.float16

... )

>>> pipe_prior.to("cuda")

>>> img1 = load_image(

... "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main"

... "/kandinsky/cat.png"

... )

>>> img2 = load_image(

... "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main"

... "/kandinsky/starry_night.jpeg"

... )

>>> images_texts = ["a cat", img1, img2]

>>> weights = [0.3, 0.3, 0.4]

>>> image_emb, zero_image_emb = pipe_prior.interpolate(images_texts, weights).to_tuple()

>>> pipe = KandinskyV22Pipeline.from_pretrained(

... "kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16

... )

>>> pipe.to("cuda")

>>> image = pipe(

... image_embeds=image_emb,

... negative_image_embeds=zero_image_emb,

... height=768,

... width=768,

... num_inference_steps=150,

... ).images[0]

>>> image.save("starry_cat.png")

KandinskyV22CombinedPipeline

class diffusers.KandinskyV22CombinedPipeline

( unet: UNet2DConditionModel, scheduler: DDPMScheduler, movq: VQModel, prior_prior: PriorTransformer, prior_image_encoder: CLIPVisionModelWithProjection, prior_text_encoder: CLIPTextModelWithProjection, prior_tokenizer: CLIPTokenizer, prior_scheduler: UnCLIPScheduler, prior_image_processor: CLIPImageProcessor )

Parameters

- scheduler (Union[DDIMScheduler, DDPMScheduler]) — A scheduler to be used in combination with unet to generate image latents.

- unet (UNet2DConditionModel) — Conditional U-Net architecture to denoise the image embedding.

- movq (VQModel) — MoVQ Decoder to generate the image from the latents.

- prior_prior (PriorTransformer) — The canonical unCLIP prior to approximate the image embedding from the text embedding.

- prior_image_encoder (CLIPVisionModelWithProjection) — Frozen image-encoder.

- prior_text_encoder (CLIPTextModelWithProjection) — Frozen text-encoder.

- prior_tokenizer (CLIPTokenizer) — Tokenizer of class CLIPTokenizer.

- prior_scheduler (UnCLIPScheduler) — A scheduler to be used in combination with prior to generate image embedding.

- prior_image_processor (CLIPImageProcessor) — An image processor to be used to preprocess the image for CLIP.

Combined Pipeline for text-to-image generation using Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

prompt: typing.Union[str, typing.List[str]]

negative_prompt: typing.Union[str, typing.List[str], NoneType] = None

num_inference_steps: int = 100

guidance_scale: float = 4.0

num_images_per_prompt: int = 1

height: int = 512

width: int = 512

prior_guidance_scale: float = 4.0

prior_num_inference_steps: int = 25

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

latents: typing.Optional[torch.FloatTensor] = None

output_type: typing.Optional[str] = 'pil'

callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None

callback_steps: int = 1

return_dict: bool = True

)

→

ImagePipelineOutput or tuple

Parameters

- prompt (str or List[str]) — The prompt or prompts to guide the image generation.

- negative_prompt (str or List[str], optional) — The prompt or prompts not to guide the image generation. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- num_images_per_prompt (int, optional, defaults to 1) — The number of images to generate per prompt.

- num_inference_steps (int, optional, defaults to 100) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- height (int, optional, defaults to 512) — The height in pixels of the generated image.

- width (int, optional, defaults to 512) — The width in pixels of the generated image.

- prior_guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance scale is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- prior_num_inference_steps (int, optional, defaults to 25) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance scale is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- generator (torch.Generator or List[torch.Generator], optional) — One or a list of torch generator(s) to make generation deterministic.

- latents (torch.FloatTensor, optional) — Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.

- output_type (str, optional, defaults to "pil") — The output format of the generated image. Choose between: "pil" (PIL.Image.Image), "np" (np.array) or "pt" (torch.Tensor).

- callback (Callable, optional) — A function that is called every callback_steps steps during inference. The function is called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).

- callback_steps (int, optional, defaults to 1) — The frequency at which the callback function is called. If not specified, the callback is called at every step.

- return_dict (bool, optional, defaults to True) — Whether or not to return an ImagePipelineOutput instead of a plain tuple.

Returns

ImagePipelineOutput or tuple

Function invoked when calling the pipeline for generation.
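The interaction between callback and callback_steps can be sketched with a stand-in denoising loop. Everything here is illustrative: `run_with_callback` is a hypothetical function, the latents are a plain float rather than a torch.FloatTensor, and the timestep values are invented, since a real pipeline takes them from its scheduler.

```python
def run_with_callback(num_inference_steps, callback=None, callback_steps=1):
    """Toy loop that invokes `callback` every `callback_steps` steps."""
    latents = 0.0  # stand-in for the latents tensor
    for step in range(num_inference_steps):
        timestep = num_inference_steps - 1 - step  # invented countdown timestep
        latents += 1.0  # stand-in for one denoising update
        if callback is not None and step % callback_steps == 0:
            callback(step, timestep, latents)
    return latents


seen_steps = []


def log_progress(step, timestep, latents):
    """Callback with the documented (step, timestep, latents) signature."""
    seen_steps.append(step)


final_latents = run_with_callback(10, callback=log_progress, callback_steps=4)
```

With 10 steps and callback_steps=4 the callback fires on steps 0, 4 and 8, which is a cheap way to log progress or inspect intermediate latents without slowing every step.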

Examples:

from diffusers import AutoPipelineForText2Image

import torch

pipe = AutoPipelineForText2Image.from_pretrained(

"kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16

)

pipe.enable_model_cpu_offload()

prompt = "A lion in galaxies, spirals, nebulae, stars, smoke, iridescent, intricate detail, octane render, 8k"

image = pipe(prompt=prompt, num_inference_steps=25).images[0]

enable_sequential_cpu_offload

Offloads all models to CPU using accelerate, significantly reducing memory usage. When called, unet, text_encoder, vae and safety checker have their state dicts saved to CPU and then are moved to a torch.device('meta') and loaded to GPU only when their specific submodule has its forward method called. Note that offloading happens on a submodule basis. Memory savings are higher than with enable_model_cpu_offload, but performance is lower.

KandinskyV22Img2ImgCombinedPipeline

class diffusers.KandinskyV22Img2ImgCombinedPipeline

( unet: UNet2DConditionModel, scheduler: DDPMScheduler, movq: VQModel, prior_prior: PriorTransformer, prior_image_encoder: CLIPVisionModelWithProjection, prior_text_encoder: CLIPTextModelWithProjection, prior_tokenizer: CLIPTokenizer, prior_scheduler: UnCLIPScheduler, prior_image_processor: CLIPImageProcessor )

Parameters

- scheduler (Union[DDIMScheduler, DDPMScheduler]) — A scheduler to be used in combination with unet to generate image latents.

- unet (UNet2DConditionModel) — Conditional U-Net architecture to denoise the image embedding.

- movq (VQModel) — MoVQ Decoder to generate the image from the latents.

- prior_prior (PriorTransformer) — The canonical unCLIP prior to approximate the image embedding from the text embedding.

- prior_image_encoder (CLIPVisionModelWithProjection) — Frozen image-encoder.

- prior_text_encoder (CLIPTextModelWithProjection) — Frozen text-encoder.

- prior_tokenizer (CLIPTokenizer) — Tokenizer of class CLIPTokenizer.

- prior_scheduler (UnCLIPScheduler) — A scheduler to be used in combination with prior to generate image embedding.

- prior_image_processor (CLIPImageProcessor) — An image processor to be used to preprocess the image for CLIP.

Combined Pipeline for image-to-image generation using Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(

prompt: typing.Union[str, typing.List[str]]

image: typing.Union[torch.FloatTensor, PIL.Image.Image, typing.List[torch.FloatTensor], typing.List[PIL.Image.Image]]

negative_prompt: typing.Union[str, typing.List[str], NoneType] = None

num_inference_steps: int = 100

guidance_scale: float = 4.0

strength: float = 0.3

num_images_per_prompt: int = 1

height: int = 512

width: int = 512

prior_guidance_scale: float = 4.0

prior_num_inference_steps: int = 25

generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None

latents: typing.Optional[torch.FloatTensor] = None

output_type: typing.Optional[str] = 'pil'

callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None

callback_steps: int = 1

return_dict: bool = True

)

→

ImagePipelineOutput or tuple

Parameters

- prompt (str or List[str]) — The prompt or prompts to guide the image generation.

- image (torch.FloatTensor, PIL.Image.Image, np.ndarray, List[torch.FloatTensor], List[PIL.Image.Image], or List[np.ndarray]) — Image, or tensor representing an image batch, that will be used as the starting point for the process. Can also accept image latents as image; if latents are passed directly, they will not be encoded again.

- negative_prompt (str or List[str], optional) — The prompt or prompts not to guide the image generation. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- num_images_per_prompt (int, optional, defaults to 1) — The number of images to generate per prompt.

- guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance scale is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- strength (float, optional, defaults to 0.3) — Conceptually, indicates how much to transform the reference image. Must be between 0 and 1. image will be used as a starting point, adding more noise to it the larger the strength. The number of denoising steps depends on the amount of noise initially added. When strength is 1, added noise will be maximum and the denoising process will run for the full number of iterations specified in num_inference_steps. A value of 1, therefore, essentially ignores image.

- num_inference_steps (int, optional, defaults to 100) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- height (int, optional, defaults to 512) — The height in pixels of the generated image.

- width (int, optional, defaults to 512) — The width in pixels of the generated image.

- prior_guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance scale is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- prior_num_inference_steps (int, optional, defaults to 25) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- generator (torch.Generator or List[torch.Generator], optional) — One or a list of torch generator(s) to make generation deterministic.

- latents (torch.FloatTensor, optional) — Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.

- output_type (str, optional, defaults to "pil") — The output format of the generated image. Choose between: "pil" (PIL.Image.Image), "np" (np.array) or "pt" (torch.Tensor).

- callback (Callable, optional) — A function that is called every callback_steps steps during inference. The function is called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).

- callback_steps (int, optional, defaults to 1) — The frequency at which the callback function is called. If not specified, the callback is called at every step.

- return_dict (bool, optional, defaults to True) — Whether or not to return an ImagePipelineOutput instead of a plain tuple.

Returns

ImagePipelineOutput or tuple

Function invoked when calling the pipeline for generation.

Examples:

from diffusers import AutoPipelineForImage2Image

import torch

import requests

from io import BytesIO

from PIL import Image

pipe = AutoPipelineForImage2Image.from_pretrained(

    "kandinsky-community/kandinsky-2-2-decoder", torch_dtype=torch.float16

)

pipe.enable_model_cpu_offload()

prompt = "A fantasy landscape, Cinematic lighting"

negative_prompt = "low quality, bad quality"

url = "https://raw.githubusercontent.com/CompVis/stable-diffusion/main/assets/stable-samples/img2img/sketch-mountains-input.jpg"

response = requests.get(url)

# Load the starting image and shrink it in place to fit within 768x768.

original_image = Image.open(BytesIO(response.content)).convert("RGB")

original_image.thumbnail((768, 768))

image = pipe(prompt=prompt, image=original_image, num_inference_steps=25).images[0]

enable_sequential_cpu_offload

Offloads all models to CPU using accelerate, significantly reducing memory usage. When called, unet, text_encoder, vae and safety checker have their state dicts saved to CPU and then are moved to a torch.device('meta') and loaded to GPU only when their specific submodule has its forward method called. Note that offloading happens on a submodule basis. Memory savings are higher than with enable_model_cpu_offload, but performance is lower.

KandinskyV22InpaintCombinedPipeline

class diffusers.KandinskyV22InpaintCombinedPipeline

( unet: UNet2DConditionModel, scheduler: DDPMScheduler, movq: VQModel, prior_prior: PriorTransformer, prior_image_encoder: CLIPVisionModelWithProjection, prior_text_encoder: CLIPTextModelWithProjection, prior_tokenizer: CLIPTokenizer, prior_scheduler: UnCLIPScheduler, prior_image_processor: CLIPImageProcessor )

Parameters

- scheduler (Union[DDIMScheduler, DDPMScheduler]) — A scheduler to be used in combination with unet to generate image latents.

- unet (UNet2DConditionModel) — Conditional U-Net architecture to denoise the image embedding.

- movq (VQModel) — MoVQ Decoder to generate the image from the latents.

- prior_prior (PriorTransformer) — The canonical unCLIP prior to approximate the image embedding from the text embedding.

- prior_image_encoder (CLIPVisionModelWithProjection) — Frozen image-encoder.

- prior_text_encoder (CLIPTextModelWithProjection) — Frozen text-encoder.

- prior_tokenizer (CLIPTokenizer) — Tokenizer of class CLIPTokenizer.

- prior_scheduler (UnCLIPScheduler) — A scheduler to be used in combination with prior to generate image embedding.

- prior_image_processor (CLIPImageProcessor) — An image processor used to preprocess images for CLIP.
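The component names above suggest a two-stage layout: names starting with prior_ belong to the prior stage, and the rest belong to the decoder stage. The sketch below only groups the names by that prefix. The helper group_by_stage is hypothetical and written for illustration, and reading the prefix as a stage marker is an assumption drawn from the naming, not a description of the library's internals.

```python
from typing import Dict, List

# Component names exactly as listed in the parameters above.
COMPONENTS = [
    "unet",
    "scheduler",
    "movq",
    "prior_prior",
    "prior_image_encoder",
    "prior_text_encoder",
    "prior_tokenizer",
    "prior_scheduler",
    "prior_image_processor",
]


def group_by_stage(names: List[str], prefix: str = "prior_") -> Dict[str, List[str]]:
    """Hypothetical helper: group component names by stage.

    Names carrying the prefix go to the "prior" stage with the prefix
    stripped; all other names go to the "decoder" stage unchanged.
    """
    stages: Dict[str, List[str]] = {"prior": [], "decoder": []}
    for name in names:
        if name.startswith(prefix):
            stages["prior"].append(name[len(prefix):])
        else:
            stages["decoder"].append(name)
    return stages


stages = group_by_stage(COMPONENTS)
for stage, members in stages.items():
    print(f"{stage}: {', '.join(members)}")
```

Note that both stages end up with a component called scheduler, which is presumably why the combined pipeline needs the prefix to tell them apart.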

Combined Pipeline for inpainting generation using Kandinsky

This model inherits from DiffusionPipeline. Check the superclass documentation for the generic methods the library implements for all the pipelines (such as downloading or saving, running on a particular device, etc.)

__call__

(
    prompt: typing.Union[str, typing.List[str]]
    image: typing.Union[torch.FloatTensor, PIL.Image.Image, typing.List[torch.FloatTensor], typing.List[PIL.Image.Image]]
    mask_image: typing.Union[torch.FloatTensor, PIL.Image.Image, typing.List[torch.FloatTensor], typing.List[PIL.Image.Image]]
    negative_prompt: typing.Union[str, typing.List[str], NoneType] = None
    num_inference_steps: int = 100
    guidance_scale: float = 4.0
    num_images_per_prompt: int = 1
    height: int = 512
    width: int = 512
    prior_guidance_scale: float = 4.0
    prior_num_inference_steps: int = 25
    generator: typing.Union[torch._C.Generator, typing.List[torch._C.Generator], NoneType] = None
    latents: typing.Optional[torch.FloatTensor] = None
    output_type: typing.Optional[str] = 'pil'
    callback: typing.Union[typing.Callable[[int, int, torch.FloatTensor], NoneType], NoneType] = None
    callback_steps: int = 1
    return_dict: bool = True
) → ImagePipelineOutput or tuple

Parameters

- prompt (str or List[str]) — The prompt or prompts to guide the image generation.

- image (torch.FloatTensor, PIL.Image.Image, np.ndarray, List[torch.FloatTensor], List[PIL.Image.Image], or List[np.ndarray]) — Image, or tensor representing an image batch, that will be used as the starting point for the process. Can also accept image latents as image; if latents are passed directly, they will not be encoded again.

- mask_image (np.array) — Tensor representing an image batch, to mask image. White pixels in the mask will be repainted, while black pixels will be preserved. If mask_image is a PIL image, it will be converted to a single channel (luminance) before use. If it's a tensor, it should contain one color channel (L) instead of 3, so the expected shape would be (B, H, W, 1).

- negative_prompt (str or List[str], optional) — The prompt or prompts not to guide the image generation. Ignored when not using guidance (i.e., ignored if guidance_scale is less than 1).

- num_images_per_prompt (int, optional, defaults to 1) — The number of images to generate per prompt.

- guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance is enabled by setting guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- num_inference_steps (int, optional, defaults to 100) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- height (int, optional, defaults to 512) — The height in pixels of the generated image.

- width (int, optional, defaults to 512) — The width in pixels of the generated image.

- prior_guidance_scale (float, optional, defaults to 4.0) — Guidance scale as defined in Classifier-Free Diffusion Guidance. prior_guidance_scale is defined as w of equation 2 of the Imagen paper. Guidance is enabled by setting prior_guidance_scale > 1. A higher guidance scale encourages the model to generate images that are closely linked to the text prompt, usually at the expense of lower image quality.

- prior_num_inference_steps (int, optional, defaults to 25) — The number of denoising steps. More denoising steps usually lead to a higher quality image at the expense of slower inference.

- generator (torch.Generator or List[torch.Generator], optional) — One or a list of torch generator(s) to make generation deterministic.

- latents (torch.FloatTensor, optional) — Pre-generated noisy latents, sampled from a Gaussian distribution, to be used as inputs for image generation. Can be used to tweak the same generation with different prompts. If not provided, a latents tensor will be generated by sampling using the supplied random generator.

- output_type (str, optional, defaults to "pil") — The output format of the generated image. Choose between: "pil" (PIL.Image.Image), "np" (np.array) or "pt" (torch.Tensor).

- callback (Callable, optional) — A function that is called every callback_steps steps during inference. The function is called with the following arguments: callback(step: int, timestep: int, latents: torch.FloatTensor).

- callback_steps (int, optional, defaults to 1) — The frequency at which the callback function is called. If not specified, the callback is called at every step.

- return_dict (bool, optional, defaults to True) — Whether or not to return an ImagePipelineOutput instead of a plain tuple.
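To make the role of guidance_scale concrete, here is a minimal sketch of the usual classifier-free guidance combination, where w weights the step from the unconditional prediction toward the conditional one. The function apply_guidance and the toy three-element "predictions" are assumptions made for illustration; the pipeline operates on tensors and its internals may differ in detail.

```python
from typing import List


def apply_guidance(uncond: List[float], cond: List[float], guidance_scale: float) -> List[float]:
    """Combine predictions as uncond + w * (cond - uncond), element by element.

    This is algebraically the same as w * cond + (1 - w) * uncond.
    """
    return [u + guidance_scale * (c - u) for u, c in zip(uncond, cond)]


# Toy stand-ins for the unconditional and prompt-conditioned noise predictions.
uncond = [0.0, 1.0, -2.0]
cond = [1.0, 1.0, 0.0]

# With w = 1 the result is just the conditional prediction: no extra guidance.
no_guidance = apply_guidance(uncond, cond, 1.0)

# With w = 4 (the default above) the difference between the two is amplified.
guided = apply_guidance(uncond, cond, 4.0)

print("w = 1.0:", no_guidance)
print("w = 4.0:", guided)
```

Where the two predictions already agree (the middle element), the scale has no effect; where they differ, a larger w pushes the result further in the direction the prompt suggests.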

Returns

ImagePipelineOutput or tuple

Function invoked when calling the pipeline for generation.
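The interplay of callback and callback_steps can be sketched without loading a model. The loop below is a hypothetical stand-in for the denoising loop, and it assumes the callback fires whenever the step index is divisible by callback_steps; run_steps, the fake timesteps, and the None placeholder for latents are all illustrative and not part of the library.

```python
from typing import Callable, List, Optional


def run_steps(
    num_inference_steps: int,
    callback: Optional[Callable[[int, int, object], None]] = None,
    callback_steps: int = 1,
) -> None:
    """Hypothetical stand-in for a denoising loop; no model is involved."""
    latents = None  # placeholder for the latents tensor a real pipeline would update
    # Fake timesteps counting down, one per inference step.
    timesteps = list(range(num_inference_steps - 1, -1, -1))
    for step, timestep in enumerate(timesteps):
        # A real pipeline would denoise the latents here.
        if callback is not None and step % callback_steps == 0:
            callback(step, timestep, latents)


seen: List[int] = []


def log_progress(step: int, timestep: int, latents: object) -> None:
    """Callback with the documented (step, timestep, latents) signature."""
    seen.append(step)


run_steps(num_inference_steps=25, callback=log_progress, callback_steps=5)
print(seen)
```

Under that assumption, 25 steps with callback_steps=5 trigger the callback five times, starting at step 0.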

Examples:

from diffusers import AutoPipelineForInpainting
from diffusers.utils import load_image
import torch
import numpy as np

pipe = AutoPipelineForInpainting.from_pretrained(
    "kandinsky-community/kandinsky-2-2-decoder-inpaint", torch_dtype=torch.float16
)
pipe.enable_model_cpu_offload()

prompt = "A fantasy landscape, Cinematic lighting"
negative_prompt = "low quality, bad quality"

original_image = load_image(
    "https://huggingface.co/datasets/hf-internal-testing/diffusers-images/resolve/main/kandinsky/cat.png"
)

mask = np.zeros((768, 768), dtype=np.float32)
# Let's mask out an area above the cat's head
mask[:250, 250:-250] = 1

image = pipe(prompt=prompt, image=original_image, mask_image=mask, num_inference_steps=25).images[0]

enable_sequential_cpu_offload

Offloads all models to CPU using accelerate, significantly reducing memory usage. When called, unet, text_encoder, vae and safety checker have their state dicts saved to CPU and then are moved to a torch.device('meta') and loaded to GPU only when their specific submodule has its forward method called. Note that offloading happens on a submodule basis. Memory savings are higher than with enable_model_cpu_offload, but performance is lower.
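For the inpainting example above, it can help to see which pixels the mask expression mask[:250, 250:-250] = 1 actually selects on a 768x768 mask. The sketch below resolves the two slices with Python's built-in slice.indices and counts the repainted pixels; the helper resolve is hypothetical and the code only reasons about the mask geometry, not about the image or the pipeline.

```python
# Mask size used in the inpainting example: 768 x 768, where 1 = repaint, 0 = keep.
HEIGHT, WIDTH = 768, 768


def resolve(s: slice, length: int) -> range:
    """Hypothetical helper: the concrete indices a slice selects on an axis of `length`."""
    return range(*s.indices(length))


# mask[:250, 250:-250] selects these rows and columns.
rows = resolve(slice(None, 250), HEIGHT)
cols = resolve(slice(250, -250), WIDTH)

repainted = len(rows) * len(cols)
fraction = repainted / (HEIGHT * WIDTH)

print(f"rows {rows.start}..{rows.stop - 1}, cols {cols.start}..{cols.stop - 1}")
print(f"{repainted} of {HEIGHT * WIDTH} pixels marked for repainting ({fraction:.1%})")
```

The negative stop index trims 250 columns from the right edge, so the repainted band is horizontally centered at the top of the image.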